The rumored “Claude code leak” continues to trend across tech communities, but as of March 31, 2026, there is no verified evidence that Anthropic’s Claude source code has been leaked or exposed.

Interest in the topic has surged in recent days, driven largely by online discussion and speculation. Despite the noise, no trusted industry source has confirmed a leak. So far, the story is one of widespread attention without factual backing.

Understanding Claude and Its Importance

Claude is a flagship artificial intelligence system created by Anthropic, a U.S.-based AI company known for its focus on safety and responsible AI deployment.

The model powers:

- Advanced conversational AI tools

- Coding and developer assistance

- Enterprise-level automation solutions

Its underlying architecture, model weights, and training processes are closely guarded because they represent critical intellectual property in a highly competitive AI market.

Because of this, even unverified claims of a “Claude code leak” quickly attract attention across the tech ecosystem.

No Confirmed Breach or Exposure

As of now, no security incident involving Claude has been confirmed.

There is currently no public evidence of:

- Source code leaks

- Model weight exposure

- Internal system compromises

- Unauthorized access to Claude infrastructure

No official warnings or disclosures have been issued. Security researchers and industry observers have not validated any claims tied to leaked materials.

How the Rumor Gained Momentum

The “Claude code leak” discussion appears to have spread quickly, fueled by a mix of confusion and speculation.

Key drivers include:

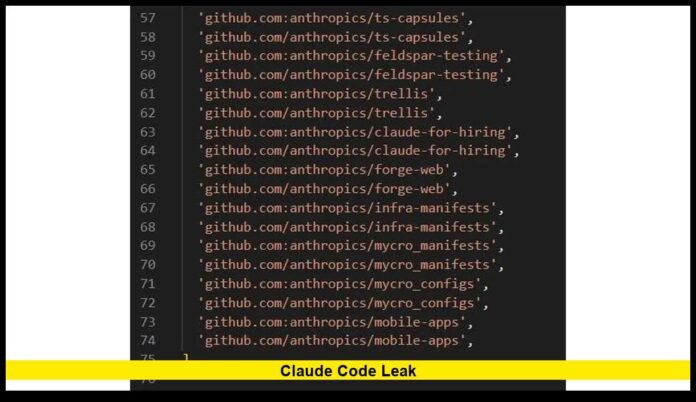

- Viral posts sharing unverified screenshots

- Mislabeling of publicly available AI code as “leaked”

- Growing curiosity about proprietary AI systems

- Ongoing debates around open-source versus closed AI models

In several cases, content presented as leaked code turned out to be unrelated or already public.

This pattern highlights how quickly misinformation can circulate in tech spaces.

Why a Leak Would Be a Major Event

Although no leak has been confirmed, the attention around this topic reflects how significant such an event would be.

If a real breach occurred, it could have wide-reaching consequences:

Intellectual Property Risk

Claude represents years of research. A leak could undermine Anthropic’s competitive position.

Security and Misuse Concerns

Access to internal systems or model weights could let bad actors misuse Claude’s capabilities or work around its safeguards.

Enterprise Impact

Many businesses build products and workflows on AI platforms like Claude, and a security failure could erode their trust.

Regulatory Pressure

U.S. regulators are already scrutinizing AI, and a high-profile breach could accelerate policy action.

Anthropic’s Focus on AI Safety

Anthropic has consistently emphasized safety and control in its AI development strategy.

Key practices include:

- Restricted access to sensitive systems

- Structured deployment environments

- Continuous monitoring for vulnerabilities

- Emphasis on aligned AI behavior

There is no verified evidence that these safeguards have failed.

Separating Facts From Viral Claims

In fast-moving tech discussions, distinguishing truth from rumor is critical.

Common warning signs of an unreliable claim include:

- Lack of confirmation from official company channels

- No technical validation or reproducible proof

- Anonymous or unverifiable sources

- Files or code that match publicly available resources

Credible leaks typically include verifiable technical data and confirmation from recognized experts.
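One of the warning signs above, files that match publicly available resources, can be checked mechanically. The Python snippet below is a minimal sketch, assuming you already have both the alleged leaked file and a candidate public copy (for example, from an open-source repository) on disk. It compares their SHA-256 digests. The function names `sha256_of` and `matches_public_copy` are illustrative only and are not part of any official tool.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file, reading it in 64 KiB chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        # Read until handle.read() returns b"" (end of file).
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_public_copy(alleged_leak: Path, public_copy: Path) -> bool:
    """Hypothetical helper: True if the alleged leak is byte-for-byte
    identical to a file that is already publicly available."""
    return sha256_of(alleged_leak) == sha256_of(public_copy)
```

For example, `matches_public_copy(Path("alleged.py"), Path("public.py"))` returns `True` only when the two files are identical, which would show the “leak” is simply public material. A mismatch proves nothing on its own, since changing even one byte changes the hash.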

Bigger Picture: AI Security in Focus

The “Claude code leak” discussion reflects a broader shift in how AI security is perceived.

As AI systems become more powerful:

- Security expectations are rising

- Companies are investing heavily in protection

- Public awareness of risks is increasing

Even unverified rumors now generate significant attention due to the importance of AI infrastructure.

What Developers and Users Should Keep in Mind

Even without a confirmed leak, caution is warranted.

Recommended actions:

- Avoid downloading or sharing alleged leaked files

- Verify claims before accepting them as true

- Follow official updates from AI companies

- Use trusted platforms for AI tools

These steps help reduce exposure to misinformation and potential security risks.

Current Reality: Rumor, Not Confirmation

At this stage, the “Claude code leak” remains unverified and unsupported by evidence. The discussion continues to spread, but no credible proof has emerged.

The situation shows how quickly speculation can gain traction in the AI space, especially when it involves high-profile systems.

Stay alert, question viral claims, and keep tracking confirmed updates as this topic develops.